Open Synthetic Dataset for Improving Cyclist Detection

At Parallel Domain, we believe that collaboration, sharing, and community participation will move the field of synthetic data forward.

Visit our blog, including this blog post in which we achieved a double-digit improvement in average precision (AP) for cyclists using our synthetic data. We’re publishing the datasets we used – a first for our industry – so that you can not only reproduce the results but also use our work as a roadmap for applying synthetic data effectively in your own pipelines.

Getting Started

To access our Open Datasets, you’ll need to create a Parallel Domain account and get in touch through the Contact Us page.

You can use any programming abstraction to interact with the datasets, but we recommend using PD-SDK. It’s a Python library that allows you to load scenes, frames, and annotations with simple library calls. It currently supports DGP (the format used for these datasets), nuScenes by Motional, and Cityscapes data formats.

Full API documentation on PD-SDK can be found here.

With the correct imports from the library, querying a frame is as simple as:

    import matplotlib.pyplot as plt

    from paralleldomain.decoding.helper import decode_dataset

    dataset = decode_dataset(dataset_path="/path/to/the/dataset_folder", dataset_format="dgp")

    # load scene
    scene = dataset.get_scene(scene_name=dataset.scene_names[0])

    # load camera image from frame
    frame = scene.get_frame(frame_id=scene.frame_ids[0])
    image_data = frame.get_camera(camera_name=frame.camera_names[0]).image

    # print camera image
    plt.imshow(image_data.rgba)
    plt.title(frame.camera_names[0])
    plt.show()

Background

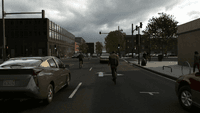

Parallel Domain creates synthetic data used to train and validate perception models for autonomous systems such as self-driving cars and autonomous delivery drones. The virtual worlds in which we capture our data are procedurally generated from real world map data, in which we spawn agents such as pedestrians, cyclists, and cars around the ego vehicle for scene capture.

Autonomous systems face the long tail problem: rare events and object classes appear too seldom in collected data for models to learn them well. Filling that gap with real-world data is by definition difficult, if not impossible. This is where synthetic data becomes incredibly useful, because it is fully programmable. We can generate data with the exact scenarios, agents, sensor configurations, environments, weather conditions, and annotation rulesets that we need. Solving the long tail data collection problem turns into a far simpler data specification problem.

Cyclists are a perfect example of the long tail problem. Because of the class’s rarity, the best performing models on common object detection benchmarks show 10-15% lower average precision on cyclists than on cars. If autonomous vehicles are to reduce the number of fatalities on the road, cyclist detection is a great place to start.

To demonstrate the power of synthetic data for cyclist detection, the machine learning team at Parallel Domain published a blog post in which we supplement the popular KITTI and nuImages datasets with PD synthetic data. We see significant improvements in 2D bounding box detection for cyclists using an off-the-shelf YOLOv3 model – even when reducing the proportion of real-world data by two thirds.

By publishing this dataset, we invite you to reproduce this research to experience the power of synthetic data yourself.

Dataset Description

In the blog post, we benchmarked synthetic data alongside the KITTI and nuImages datasets. Accordingly, the downloadable archive is split into two separate datasets, each in its own folder and formatted according to the Dataset Governance Policy (DGP) from Toyota Research Institute:

- PD-K: 11,250 RGB images rendered matching the left KITTI color camera with corresponding 2D bounding box annotations.

- PD-N: 67,500 RGB images rendered matching nuImages cameras with corresponding 2D bounding box annotations.

For both datasets, we rendered 30 frames of the same 375 driving scenes at 2 Hz using the respective sensor configurations. Please note that we did not aim for digital twins of the real datasets, but instead created complementary datasets using the same sensors.
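The image counts listed above follow directly from this capture setup. As a quick sanity check (a minimal sketch; the variable names are illustrative, and the per-dataset camera counts are the ones given under Sensor Rigs):

```python
SCENES = 375           # driving scenes shared by both datasets
FRAMES_PER_SCENE = 30  # frames rendered per scene
CAPTURE_RATE_HZ = 2    # frames rendered per second of scene time

# Cameras per dataset: one for PD-K, six for PD-N.
CAMERAS = {"PD-K": 1, "PD-N": 6}

# Every camera produces one image per frame of every scene.
image_counts = {
    name: SCENES * FRAMES_PER_SCENE * cameras
    for name, cameras in CAMERAS.items()
}

# Scene time covered by 30 frames, assuming even spacing at the capture rate.
scene_duration_s = FRAMES_PER_SCENE / CAPTURE_RATE_HZ

print(image_counts)
print(scene_duration_s)
```

The result matches the 11,250 and 67,500 images stated for PD-K and PD-N, and implies that each scene covers roughly 15 seconds.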

The scenes feature a mix of urban and suburban environments. They have varying weather and lighting conditions which roughly follow the distribution found in the original nuScenes dataset.

- 90% of the scenes are during the day

- 10% of the scenes are at night

- 82% of the scenes have no rain.

- 18% of the scenes have rain present.

Sensor Rigs

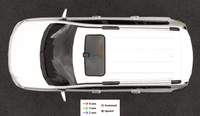

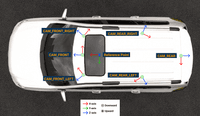

To work seamlessly with existing data, we matched virtual sensors on our ego vehicle to their real-life counterparts in the KITTI or nuScenes dataset.

We express extrinsic coordinates in meters relative to the ego reference point, defined as the midpoint of the bottom face of the chassis bounding box, i.e., (vehicle length / 2, vehicle width / 2, ground).
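To make the reference point definition concrete, here is a minimal sketch. It assumes an axis-aligned chassis box with the first axis along the vehicle length, the second along its width, and the third pointing up. The function names and the example dimensions are hypothetical and are not taken from the datasets or from PD-SDK. Only the translation is handled; sensor rotations are ignored.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def ego_reference_point(box_min: Vec3, length: float, width: float) -> Vec3:
    """Midpoint of the bottom face of an axis-aligned chassis bounding box."""
    x0, y0, z0 = box_min
    # Half the length and half the width from the minimum corner, at ground level.
    return (x0 + length / 2.0, y0 + width / 2.0, z0)


def relative_to_ego(point: Vec3, reference: Vec3) -> Vec3:
    """Express a point relative to the ego reference point (translation only)."""
    px, py, pz = point
    rx, ry, rz = reference
    return (px - rx, py - ry, pz - rz)


# Hypothetical 4.5 m x 1.8 m chassis whose minimum corner sits at the origin.
reference = ego_reference_point((0.0, 0.0, 0.0), length=4.5, width=1.8)

# Hypothetical camera 3.0 m along the length axis, centered, 1.5 m above ground.
camera_offset = relative_to_ego((3.0, 0.9, 1.5), reference)

print(reference)
print(camera_offset)
```

The actual extrinsics, including rotations, should be read from the calibration data rather than derived this way.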

PD-K uses a single front-facing camera, P2, with a resolution of 1242×375, corresponding to the left camera sensor used in KITTI after rectification. (The reference point is not shown here because it coincides with P2 in the top-down view, though the axes are rotated.)

PD-N uses 6 cameras, each with a resolution of 1600×900. Together, they cover 360 degrees around the vehicle following the nuScenes sensor setup.

You can use PD-SDK to access the precise values of sensor extrinsics and intrinsics. Alternatively, they are stored in the “dataset/any-scene/calibration” folder.

Camera frames are JPEGs compressed with quality 75.

Annotations

One of the many advantages of using synthetic data is the ability to generate pixel-perfect annotations for objects even when they are very small or severely occluded. Depending on your use case, you might want to filter annotations before using them for training or validation so that they match the annotation guidelines of your real dataset.

While our blog post focuses only on Cars, Pedestrians, and Cyclists, we provide the full set of 2D bounding box annotations. Also note that we annotate bicycles and their riders separately for increased flexibility.

Distribution

The following table shows the class distribution of objects in PD-N with a bounding box height greater than 25px, visibility above 50%, and truncation below 95%. For the purposes of bicycle detection, cyclists are oversampled in the two datasets by a factor of ~3.5x relative to KITTI cyclists and ~70x relative to nuImages cyclists.

| Category | Annotations | Share of all annotations (%) |
|---|---|---|
| Animal | 2,059 | 0.10 |
| Bicycle | 95,753 | 4.86 |
| Bicyclist | 133,602 | 6.78 |
| Bus | 1,192 | 0.06 |
| Car | 292,381 | 14.85 |
| Caravan/RV | 1,216 | 0.06 |
| CrossWalk | 41,144 | 2.09 |
| HorizontalPole | 7,911 | 0.40 |
| Motorcycle | 17,842 | 0.91 |
| Motorcyclist | 10,176 | 0.52 |
| OtherMovable | 32,332 | 1.64 |
| Pedestrian | 646,397 | 32.82 |
| SchoolBus | 523 | 0.03 |
| TemporaryConstructionObject | 8,406 | 0.43 |
| TowedObject | 321 | 0.02 |
| TrafficLight | 200,793 | 10.20 |
| TrafficSign | 70,856 | 3.60 |
| Truck | 13,019 | 0.66 |
| VerticalPole | 393,503 | 19.98 |
| Total | 1,969,426 | 100.00 |
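The filter described above the table (height greater than 25px, visibility above 50%, truncation below 95%) can be sketched as follows. This is an illustration only: the `Box2D` record and its field names are hypothetical and do not mirror PD-SDK's annotation classes, so adapt the attribute access to whatever your loader returns.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Box2D:
    """Hypothetical 2D bounding box record (field names are illustrative)."""
    category: str
    height_px: float
    visibility: float  # visible fraction of the object, 0.0 to 1.0
    truncation: float  # fraction of the object outside the image, 0.0 to 1.0


def keep(box: Box2D,
         min_height_px: float = 25.0,
         min_visibility: float = 0.5,
         max_truncation: float = 0.95) -> bool:
    """Return True if the box passes all three thresholds."""
    return (box.height_px > min_height_px
            and box.visibility > min_visibility
            and box.truncation < max_truncation)


boxes: List[Box2D] = [
    Box2D("Bicyclist", 40.0, 0.9, 0.0),     # passes all thresholds
    Box2D("Bicyclist", 12.0, 0.9, 0.0),     # too small
    Box2D("Car", 80.0, 0.3, 0.0),           # mostly occluded
    Box2D("Pedestrian", 60.0, 0.8, 0.97),   # almost fully truncated
]

filtered = [box for box in boxes if keep(box)]
print([box.category for box in filtered])
```

Keeping the thresholds as parameters makes it easy to match the annotation guidelines of a different real dataset.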

Metadata

In addition to the 2D bounding box annotations, we provide per-scene metadata, which includes:

- Rain intensity as a value between 0.0 and 1.0

- Wetness as a value between 0.0 and 1.0

- Cloud cover as a value between 0.0 and 1.0

- Fog intensity as a value between 0.0 and 1.0

- State of the streetlights (either 0 or 1)

- Region type

- Scene type
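Per-scene metadata like this is handy for selecting or summarizing scenes by condition. The sketch below works on plain dictionaries with made-up values. The key names (`rain_intensity`, `fog_intensity`, `street_lights`) are assumptions for illustration, so check the metadata in your copy of the dataset for the actual field names.

```python
from typing import Callable, Dict, List

SceneMeta = Dict[str, float]

# Hypothetical per-scene metadata records; keys and values are illustrative.
scenes: List[SceneMeta] = [
    {"rain_intensity": 0.0, "fog_intensity": 0.1, "street_lights": 0},
    {"rain_intensity": 0.6, "fog_intensity": 0.0, "street_lights": 1},
    {"rain_intensity": 0.0, "fog_intensity": 0.0, "street_lights": 1},
    {"rain_intensity": 0.2, "fog_intensity": 0.3, "street_lights": 0},
]


def share(records: List[SceneMeta],
          predicate: Callable[[SceneMeta], bool]) -> float:
    """Fraction of records for which the predicate holds."""
    if not records:
        return 0.0
    return sum(1 for record in records if predicate(record)) / len(records)


# Share of scenes with any rain, and share with streetlights switched on.
rainy_share = share(scenes, lambda s: s["rain_intensity"] > 0.0)
lights_on_share = share(scenes, lambda s: s["street_lights"] == 1)

print(rainy_share, lights_on_share)
```

The same predicate style can be used to pick out, for example, only dry daytime scenes when matching the conditions of a real dataset.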

Known Issues

Our datasets are procedurally generated, which means that we sometimes run into graphical bugs. We’ve found that they have minimal impact on detection models, but we list them here as a heads-up:

- Pedestrians teleporting between frames.

- Pedestrians walking outside of sidewalks.

- Slight intersections of bike wheels with ground.

Support

For any support-related questions, please contact support@paralleldomain.com.

Citation Template

To cite this dataset, please use the following citation format:

    @misc{thomas_pandikow_kim_stanley_grieve_2021,
      title={Open Synthetic Dataset for Improving Cyclist Detection},
      url={https://paralleldomain.com/},
      journal={https://paralleldomain.com/open-datasets/bicycle-detection},
      publisher={Parallel Domain},
      author={Thomas, Phillip and Pandikow, Lars and Kim, Alex and Stanley, Michael and Grieve, James},
      year={2021},
      month={Nov}
    }