Close the gap between simulation and reality

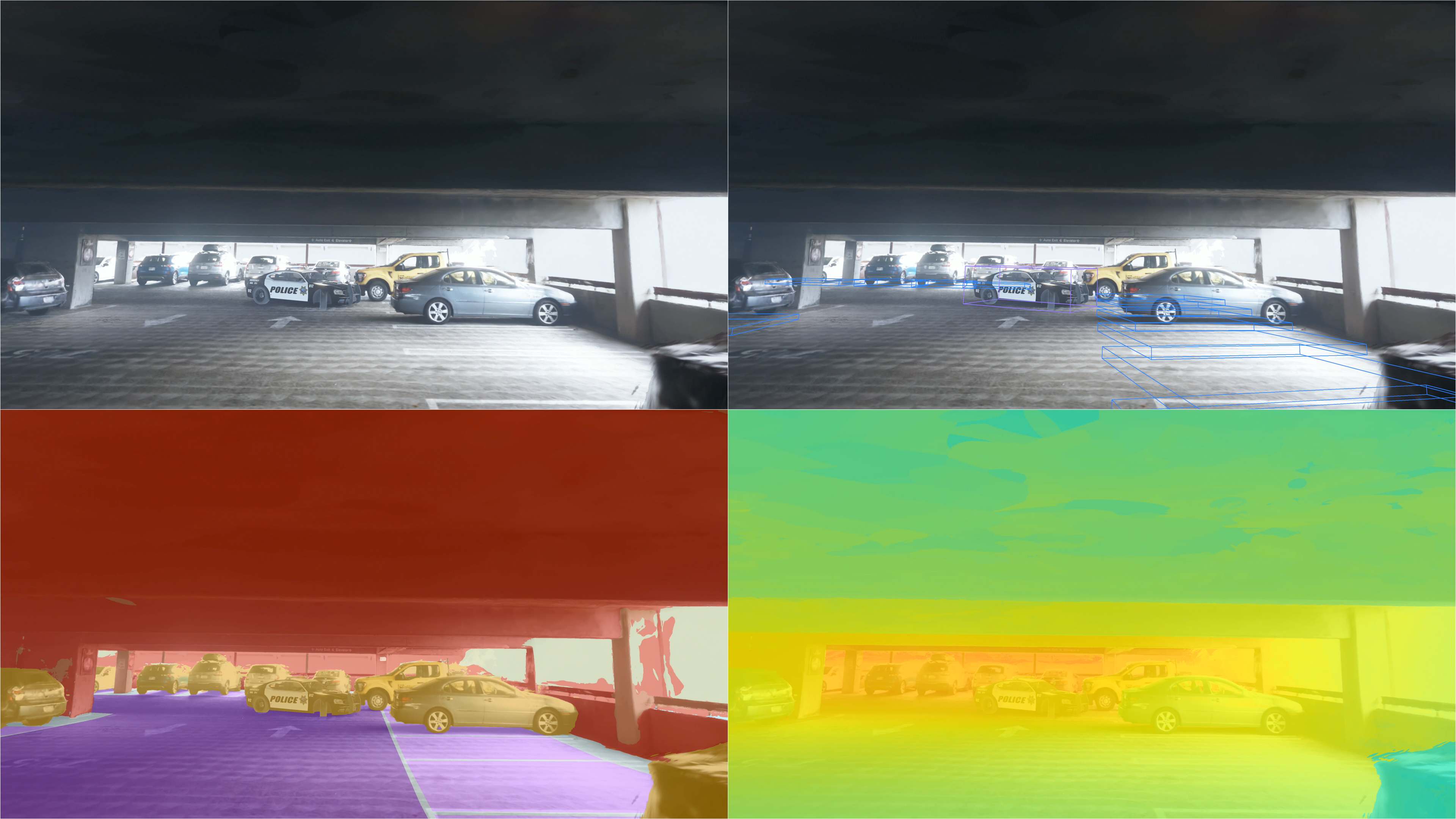

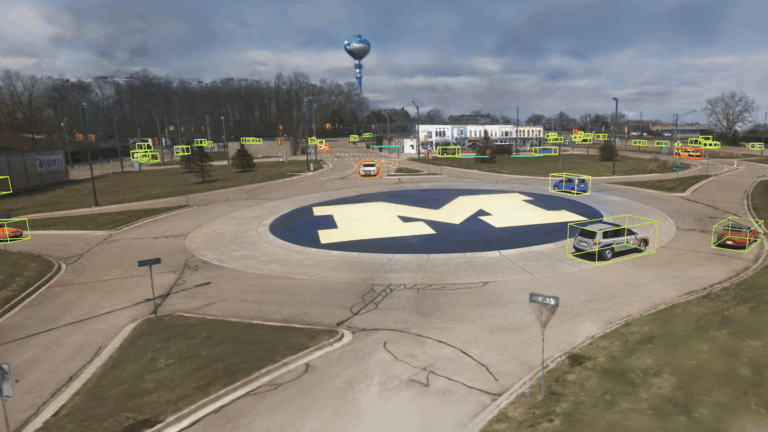

Traditional simulation rarely matches the fidelity of the real world. Parallel Domain changes that. We generate replicas from real-world capture data and deliver deterministic sensor simulation (camera, lidar, and radar) so your perception stack sees the same thing in simulation that it sees on the road, in the air, or on the warehouse floor.

Replicate the Real World

Ingest your drive or flight logs (camera, lidar, GPS), including imperfect, messy fleet data, and generate a near pixel-perfect reconstruction.

Prove It's Real

Automated sim-to-real gap reports quantify how closely your replica matches the original scene. Not just "it looks good" — measurable, auditable validation for regulatory readiness.
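
To make "measurable" concrete, here is a minimal sketch of one common image-similarity metric, peak signal-to-noise ratio (PSNR), comparing a real camera frame with its rendered replica. This illustrates the general idea only: it is an assumption that a gap report could include a metric like this, not a description of how Parallel Domain computes its reports. The `psnr` function and the sample pixel values are hypothetical.

```python
import math


def psnr(real, rendered, max_value=255.0):
    """Peak signal-to-noise ratio (dB) between two equal-length pixel sequences.

    Higher values mean the rendered frame is closer to the real one.
    """
    if len(real) != len(rendered) or not real:
        raise ValueError("inputs must be non-empty and the same length")
    # Mean squared error over all pixels.
    mse = sum((a - b) ** 2 for a, b in zip(real, rendered)) / len(real)
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_value ** 2 / mse)


# Hypothetical flattened 8-bit grayscale frames, for illustration only.
real_frame = [52, 55, 61, 66, 70, 61, 64, 73]
replica_frame = [52, 54, 61, 67, 70, 60, 64, 73]

score = psnr(real_frame, replica_frame)
print(f"PSNR: {score:.2f} dB")
```

A single number like this is auditable because anyone with the same two frames can recompute it. In practice a report would likely combine several metrics across cameras, lidar, and radar rather than rely on PSNR alone.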

Integrate with Your Toolchain

Our products plug into your existing autonomy stack via API. They support closed-loop and open-loop testing and are compatible with your CI/CD pipeline.

Scale Reconstruction and Simulation

Turn every mile driven into a reusable simulation asset. Generate thousands of test scenarios across sensor configurations, geographies, and weather conditions.

Create Variations

Deterministically modify lighting, traffic, and both ego and agent trajectories. Test edge cases that real-world collection can never reliably produce.

Trusted by innovative leaders worldwide

Why teams choose Parallel Domain

Your replicas come with everything a simulator needs

World physics, HD maps, scene segmentation, dynamic agent reconstruction, object insertion, and matched lighting. Not a demo — a deployable testing asset.

Featured Resources

We support perception use cases across industries

Schedule a demo

Let’s see what breaks your model… before the real world does.